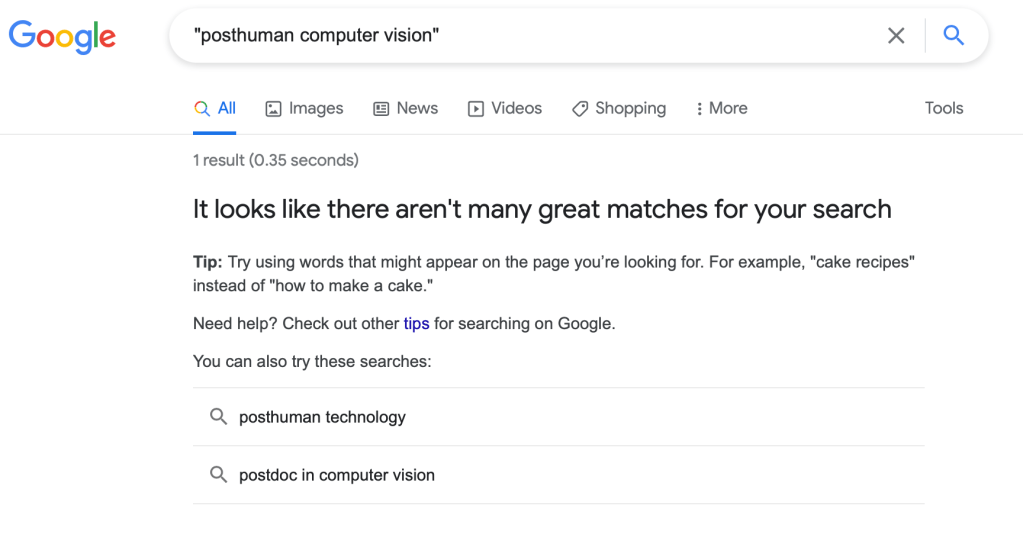

As I have been writing (albeit infrequently) here, my current interest is in the concept of “posthuman computer vision.” It is apparently not a term in wide use, and I’m not sure whether that’s good or bad news for me from a rhetorical perspective. Obviously computer vision has been a field of research for 50-60 years and posthuman theory has been around since the early 1990s, if not longer. And to be fair, if you type in posthuman computer vision without the quotes, you get something like 18K results on Google Scholar. In short, there are folks out there sharing my interest even if they aren’t quite using this term. And even if the term itself is unused, the concept is easy to discern.

You take the underlying concepts of posthuman theory (breaking down the divisions between nature and culture, humans and nonhumans, etc.) and then add the basic premise of computer vision: building computers that can see as humans do and/or expand our capacities for sight. The fundamental results are:

- Vision is always-already an assemblage that enfolds a capacity to sense light waves with a stimulus response. These mechanisms can be biological (or not). They can also involve semiotics/coding (or not).

- Posthuman computer vision then specifically examines these capacities as they occur at the intersections among digital computers, their technical networks, their human users, and the world they collectively perceive.

Braidotti writes that “the posthuman condition implies that ‘we’ the human and non-human inhabitants of this particular planet–are currently positioned between the Fourth Industrial Revolution and the Sixth Extinction. Yes, we are in this together: between the algorithmic devil and the acidified deep blue sea” (Posthuman Knowledge, 2). A little dramatic, maybe, but in this context what’s interesting is how that positioning requires posthuman computer vision. From a strictly technical perspective, the Fourth Industrial Revolution (e.g., automation, robotics) is not possible without computer vision to guide robotic factory processes, steer autonomous vehicles, and provide feedback to other processes that still include humans in short-term loops. Similarly, our understanding of the Anthropocene and climate change (i.e., the Sixth Extinction, to borrow the title of Elizabeth Kolbert’s book as Braidotti does) is not possible without computer vision to provide maps and simulations of complex, global ecological phenomena.

I’ve been thinking about this in terms of assemblage theory, radical media archeology, and Deleuze and Guattari’s concept of strata (see “Geology of Morals” in A Thousand Plateaus). Without showing all of my work, I arrive at something like the following:

- Assemblages of posthuman computer vision construct a unique superlinear spatiotemporality deriving from their capacity to make space and time not only coded/symbolic (as language does) but calculable and subject to mathematic-algorithmic manipulation.

I should interject here that all assemblages have their own spatiotemporality that arises from their material conditions. Individual humans have their own senses of space and time. We are connected as members of a population that comprises our species (which has existed for millennia and spans the globe so obviously it has its own space and time). Cosmological assemblages have space and time that are constructed by gravity in ways we don’t fully understand (and certainly I don’t fully understand). And we are connected to all of those but in ways that affect us without being deterministic. E.g., I am affected by gravity but it doesn’t determine my life. I am affected by being part of a species but it doesn’t determine my life.

This is where I find it useful to bring in the concept of strata from assemblage theory (and Ian Buchanan’s Assemblage Theory and Method has been helpful to me in this area). Basically, in “Geology of Morals,” D&G define three strata: geological, biological, and techno-semiological. Assemblages pass through the strata, linking them together in different ways. I’ve been conceiving of the digital-calculative as a substratum of the techno-semiological stratum.

I’m not going to delve into the complexities of that here, but I liked how Buchanan also observed that D&G use strata to talk about various layers of our lives. Here I think it becomes easier to perceive the digital-calculative as a new substratum/layer, since anyone who was an adult before the 21st century can probably recall a time when their life didn’t have a digital layer. We can probably also reflect on how the last decade has introduced an algorithmic layer: counting steps, GPS map directions, smartphone notifications, website recommendations, etc. We gain both new capacities and new obligations; we become empowered by being plugged into things. In many respects that’s not new, but the specific materiality and spatiotemporality of computer vision in creating a calculable visual world is.

From that observation, there are many directions to follow, many different instances of posthuman computer vision. So I’m thinking it’s a fruitful territory. (And obviously the same could be done with our other senses.)
