Last month at the Conference on College Composition and Communication, the national conference in the discipline of rhetoric and composition, the following resolution was passed: “we affirm the rights of students and teachers to refuse to sign up for, prompt, or otherwise use generative AI in the writing classroom.” The resolution articulates several concerns that I would summarize in the following way. It argues that writing instruction’s responsibility is to pay attention to technological change without having corporate tools or narrow workforce-training goals imposed upon its pedagogy or curriculum. Students and instructors have the right to refuse that imposition. This is a matter of academic freedom. In the terms of the resolution, AI refusal is an act of conscience. As most writing instructors are contingent faculty, insisting on these protections at the disciplinary level is important for rhetoric and composition.

Unlike other disciplinary positions on generative AI, which often focus on its use in published research, this resolution focuses on pedagogy and curriculum. It doesn’t assert that AI should never be taught. It doesn’t assert a refusal to study AI in its entirety. Instead, it asserts the right of instructors to refuse the imposition of a particular perspective and narrative regarding AI. The resolution is narrow in this respect, but it opens a larger question about the relation of faculty, particularly contingent faculty, to demands to adopt a predetermined curriculum, particularly those aligned with political or market-driven objectives.

This larger question also asks how we might proceed in good conscience in relation to AI, particularly in terms of pedagogy and curriculum. Conscience relies on shared knowledge as the basis of judgment; in turn, conscience arises from the judgment of what is and is not shared. In short, conscience requires a refusal of the unconscionable. My interest here is in how refusal and conscience diagram pedagogy and curricular approaches to AI.

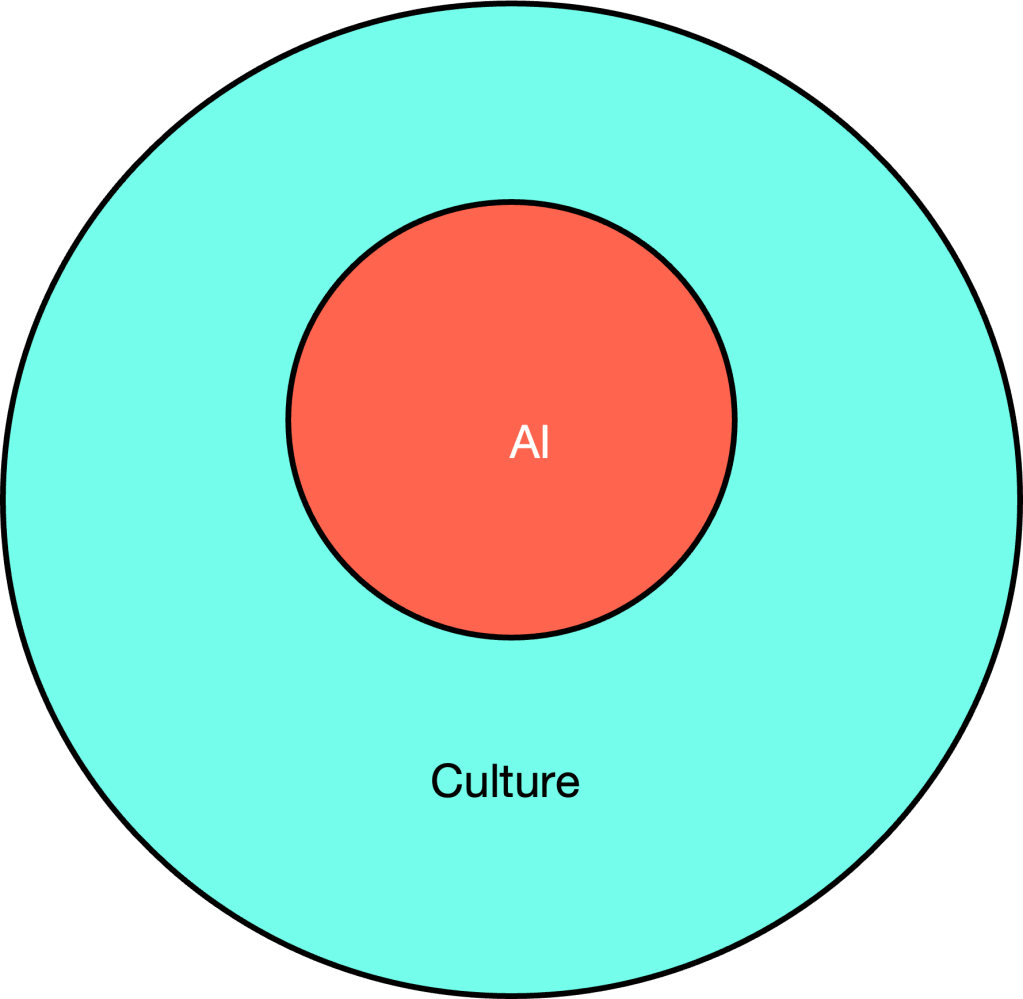

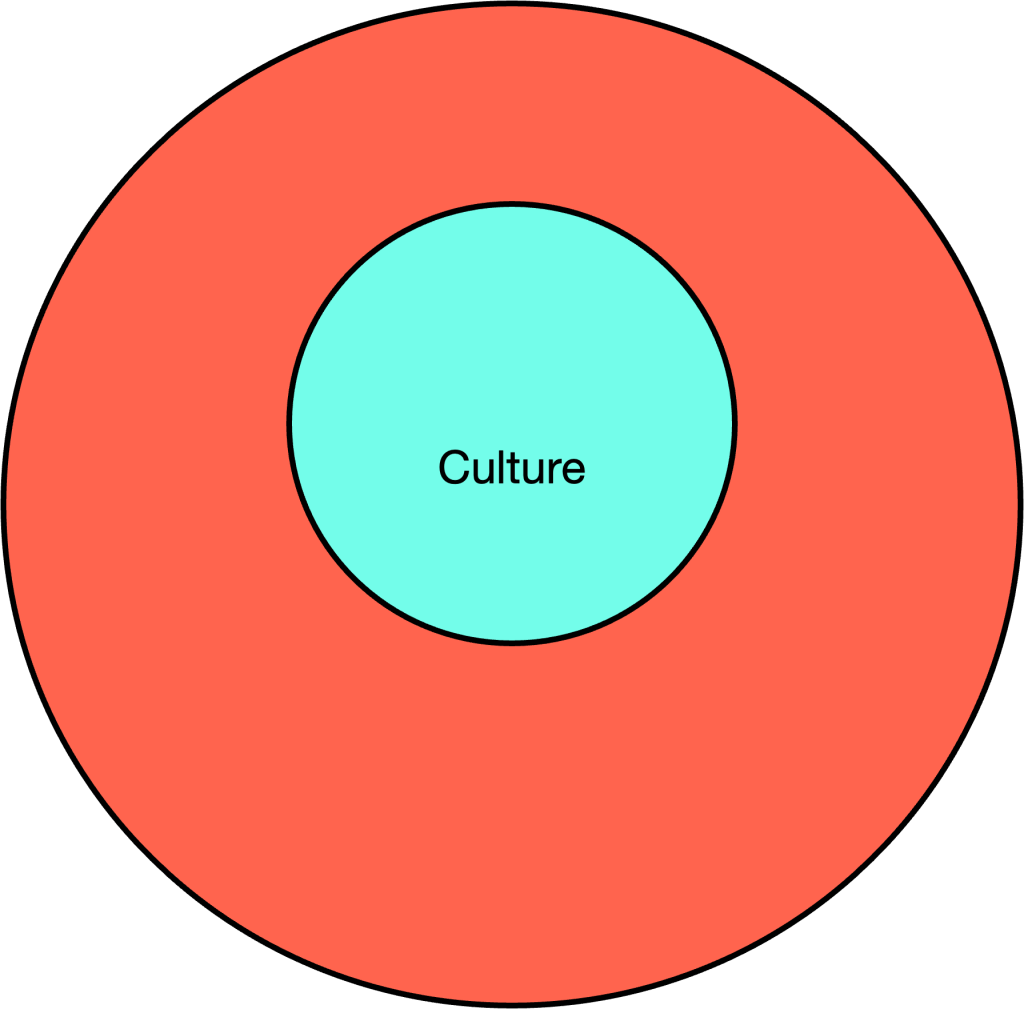

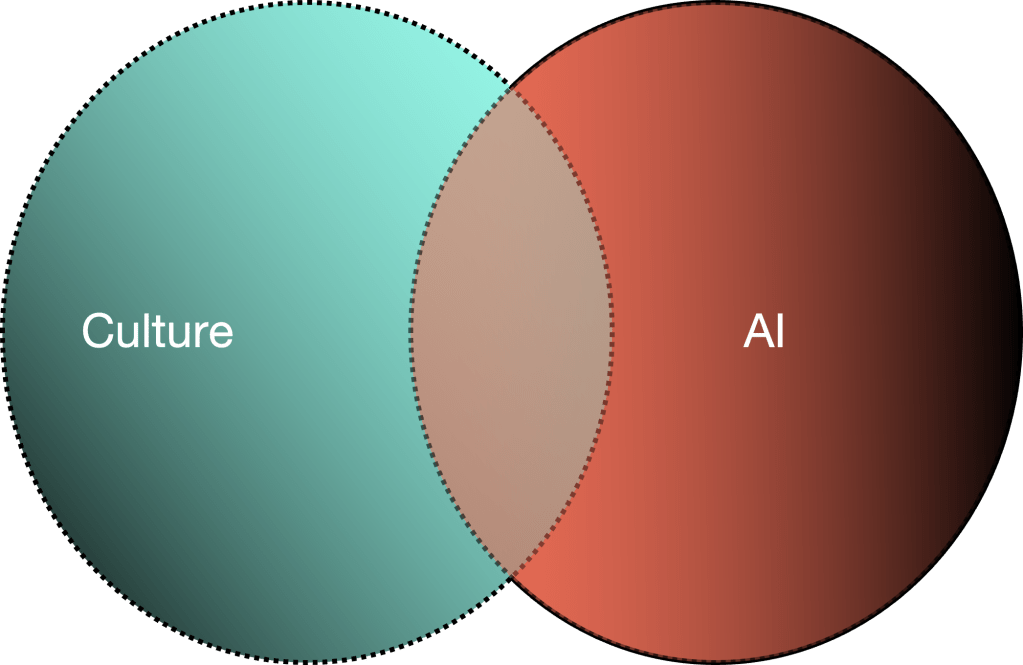

For illustrative purposes, I have three Venn diagrams describing different relations between AI and culture. My contention is that we can hold only one of these positions and must refuse the others. This necessity of refusal is mandated not so much by logic as by conscience. That said, one might refuse all three of these and many other possible relations as well. And I want to speak about them specifically in terms of pedagogy and curriculum.

In figure one, AI exists within culture. In this figure, artificial intelligence is part of a material-cultural history. AI shapes and is shaped by culture. AI is part of a history of computation, technology, and media, as well as nationalism, capitalism, and so on. Each perspective addresses a different artificial intelligence or addresses AI differently, if we imagine there is a single “AI” out there to address. If we accept this diagram, then I would ask how it describes our shared knowledge, that is, how it situates us in relation to one another and to the teaching of AI.

Figure two appears opposite to figure one, but it isn’t that. If figure one is primarily a material and historical relation, figure two is a knowledge claim. It indicates that culture can be fully known by AI. At its maximum, artificial general intelligence asserts that all that can be known by humans can be calculated and represented in AI. A different, less maximal version of AGI would settle for output that can serve as an efficient proxy for human cognitive labor. In the weaker case, the argument is not that AI represents all that humans can think, but rather that it outcompetes human cognition in the market. Again, we can ask how this diagram describes our shared knowledge and how it situates us in relation to one another and the teaching of AI.

If my claim is accurate, then it is not possible to accept both positions. At least one must be refused. Or more accurately, it is unconscionable to accept both. For if we recognize AI as a product of materiality, culture, and history, then it would be unconscionable to pursue its reduction within the operations of a particular machine. If conscience begins with shared knowledge, then it requires a shared response-ability. That is, it requires both a capacity to recognize those with whom we share knowledge and a commitment to that shared space.

Figure three models this ethics of sharing knowledge, or conscientious exchange. It is also a familiar model of interdisciplinary interaction, but it is not always as easy as it seems. As Isabelle Stengers warns, “the idea that we belong to a tradition that is doomed to define other peoples as entertaining mere beliefs, or nature as a mere resource, is a very infectious one, which you meet everywhere. It breeds guilt and poisons our capacity to resist” (Another Science is Possible 155-6). That is, we paralyze ourselves with this assertion. That said, for Stengers,

> The idea is not to transcend the particularity of the so-called modern tradition, but to think with this particularity, to induce the capacity to imagine the possibility that it can be regenerated or civilised – which does not mean universalised. On the contrary, it means thinking with its own specific, dangerous and never innocent ways of weaving relations. (156)

In developing a pedagogical and curricular conscience regarding AI, we must proceed with an awareness of our “never innocent ways.” Where AI and culture address one another, their conscience must include a capacity for refusal. Refusal, when undertaken as an act of conscience, holds open the potential for another future. If we recognize the pragmatism in not foreclosing future thought, then we also recognize our shared knowledge, and thus our conscientiousness, is incomplete. Staying with the trouble of our never innocent ways to resolve disputes ultimately means developing a conscience for the sustainability of difference over the triumph of a particular universalization.

The 4Cs resolution insists we recognize our colleagues and students exercising good conscience in their acts of AI refusal. The alternative to this recognition would be a refusal of its own: a refusal of the refusers. That judgment is then reflected as the refusers of the refusers of the refusers, and so on. In our 24/7 networked world, what I am describing is already history. It has happened and continues to happen.

Stengers proposes an alternative approach to sharing knowledge and developing a conscience. My point is that for the pedagogical and curricular engagement with artificial intelligence to proceed in good conscience, it must begin with a recognition of conscientious objection.
